LangChain is a popular framework for developers looking to build LLM-powered applications. It makes it easier to organize large amounts of data so that LLMs can access it quickly, and it enables LLMs to generate responses based on the most recent data available online.

What is LangChain?

LangChain is an open-source framework for building applications that use large language models (LLMs). LLMs are large deep learning models that are pre-trained on vast amounts of data. They can respond to user input, for example by answering questions or generating text from a prompt.

When an LLM (such as GPT-3 or GPT-4) returns a completion for a single prompt, it is like getting a complete response to a single request. As an analogy, you could instruct the LLM to “create a sculpture,” and it would comply. More complex instructions, like “create a sculpture of an axolotl at the bottom of a lake,” are also acceptable, and the LLM will probably give you what you requested.

What is LangChain Expression Language?

LangChain Expression Language (LCEL) is a declarative language that makes it simple for engineers to compose chains. It was designed from the ground up to make it easier to move prototypes into production without changing the code.

- Any chain created with LCEL can be called through the asynchronous API (for example, from a LangServe server) or the synchronous API (for example, from an experimental Jupyter notebook). The same code therefore serves both prototypes and production, and a single server can handle many concurrent requests.

- Steps in an LCEL chain that do not depend on each other can be executed concurrently.

- Any chain created with LCEL can be deployed quickly with LangServe.
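The idea of composing steps declaratively and calling the same chain synchronously or asynchronously can be sketched in plain Python. This is only an illustration under stated assumptions, not LangChain's implementation: the `Step` class, `fake_model`, and `run_many` are hypothetical names, and no real LLM is called.

```python
import asyncio
from typing import Any, Callable, List


class Step:
    """Hypothetical chain step for illustration; not LangChain's own class."""

    def __init__(self, func: Callable[[Any], Any]) -> None:
        self.func = func

    def __or__(self, other: "Step") -> "Step":
        # step_a | step_b builds a new step that runs step_a, then step_b.
        return Step(lambda value: other.func(self.func(value)))

    def invoke(self, value: Any) -> Any:
        # Synchronous entry point, e.g. for use in a notebook.
        return self.func(value)

    async def ainvoke(self, value: Any) -> Any:
        # Asynchronous entry point: run the same function in a worker thread.
        loop = asyncio.get_running_loop()
        return await loop.run_in_executor(None, self.func, value)


def fake_model(prompt: str) -> str:
    # Stand-in for an LLM call; it simply echoes the prompt.
    return f"ECHO: {prompt}"


prompt = Step(lambda topic: f"Tell me a fact about {topic}.")
model = Step(fake_model)
parser = Step(lambda text: text.replace("ECHO: ", "", 1))

# The same chain object is used for both calling styles.
chain = prompt | model | parser


async def run_many(topics: List[str]) -> List[str]:
    # Serve several requests concurrently with the same chain.
    return list(await asyncio.gather(*(chain.ainvoke(t) for t in topics)))


print(chain.invoke("axolotls"))
print(asyncio.run(run_many(["lakes", "sculptures"])))
```

The design point is that the chain is defined once and the calling style is chosen at the call site, which is what lets prototype code carry over to a server unchanged.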

Why consider using LangChain?

With the LangChain framework, programmers can design agents that break larger tasks down into smaller ones and reason about them. By chaining complex commands together, you can use intermediate steps to add context and memory to completions.

With LangChain, developers can rely on the LLM to produce each intermediate step and to pose each follow-up question. You no longer need to spend time supplying input for each step and organizing the steps by hand.
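To make "adding memory to completions" concrete, here is a minimal sketch in plain Python. It assumes a hypothetical `ConversationMemory` class and a `fake_llm` stand-in; neither is part of LangChain, and a real application would call an actual model instead.

```python
from typing import Callable, List


class ConversationMemory:
    """Hypothetical memory store: keeps earlier turns as plain text."""

    def __init__(self) -> None:
        self.turns: List[str] = []

    def add(self, speaker: str, text: str) -> None:
        self.turns.append(f"{speaker}: {text}")

    def as_context(self) -> str:
        return "\n".join(self.turns)


def ask(llm: Callable[[str], str], memory: ConversationMemory, question: str) -> str:
    # Prepend the remembered turns so the model sees the earlier conversation.
    prompt = f"{memory.as_context()}\nUser: {question}\nAssistant:".lstrip()
    answer = llm(prompt)
    # Store both sides of the exchange for the next call.
    memory.add("User", question)
    memory.add("Assistant", answer)
    return answer


def fake_llm(prompt: str) -> str:
    # Stand-in for a model: reports how many prompt lines it received.
    return f"I saw {len(prompt.splitlines())} prompt lines."


memory = ConversationMemory()
first = ask(fake_llm, memory, "What is an axolotl?")
second = ask(fake_llm, memory, "Where does it live?")
print(first)
print(second)
```

The second call receives a longer prompt than the first because the earlier exchange is replayed as context, which is the essence of memory in this sense.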

Why is LangChain captivating the industry?

The intriguing thing about LangChain is that it enables teams to add context and memory to existing LLMs. By artificially adding “reasoning” in this way, the models can perform increasingly difficult tasks with greater accuracy and precision.

Developers are also excited about the platform because it offers an alternative to building user interfaces with drag-and-drop elements or code: users can simply ask for what they want.

How does LangChain work?

LangChain supports many language models, including GPT-3, Jurassic-1 Jumbo, and models available through Hugging Face. It is available for Python and JavaScript.

To use LangChain, you first need a language model. This means either building your own model or using a publicly available one such as GPT-3.

Once you have a model, you can use LangChain to create applications. LangChain provides a variety of tools and APIs that make it easy to connect language models to outside data sources, interact with their environment, and build complex applications.

It does this by connecting a series of elements known as links to form a workflow. Every link in the chain performs a particular function, such as:

- Formatting user-provided data

- Querying a data source

- Calling a language model

- Handling the output of the language model
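The four kinds of links listed above can be sketched as plain Python functions that pass a shared state along. This is a simplified illustration, not LangChain's API: `FACTS`, `run_chain`, and the link functions are hypothetical, and `call_model` is a stand-in that does not contact a real LLM.

```python
from typing import Callable, Dict, List

State = Dict[str, str]
Link = Callable[[State], State]

# Hypothetical in-memory "data source" used only for this illustration.
FACTS: Dict[str, str] = {
    "axolotl": "An axolotl is a salamander that lives in lakes.",
    "langchain": "LangChain is an open-source framework for LLM applications.",
}


def format_input(state: State) -> State:
    # Link 1: normalize the user-provided text.
    return {**state, "question": state["question"].strip()}


def fetch_context(state: State) -> State:
    # Link 2: look up facts whose key appears in the question.
    question = state["question"].lower()
    hits = [fact for key, fact in FACTS.items() if key in question]
    return {**state, "context": " ".join(hits)}


def call_model(state: State) -> State:
    # Link 3: stand-in for a language model call (no real LLM involved).
    raw = f"  Answer based on context: {state['context']}  "
    return {**state, "raw_output": raw}


def handle_output(state: State) -> State:
    # Link 4: clean up the model's raw output.
    return {**state, "answer": state["raw_output"].strip()}


def run_chain(links: List[Link], state: State) -> State:
    # Pass the state through each link in order.
    for link in links:
        state = link(state)
    return state


result = run_chain(
    [format_input, fetch_context, call_model, handle_output],
    {"question": "  What is an axolotl?  "},
)
print(result["answer"])
```

Because every link takes and returns the same kind of state, links can be reordered, replaced, or added without changing `run_chain`.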

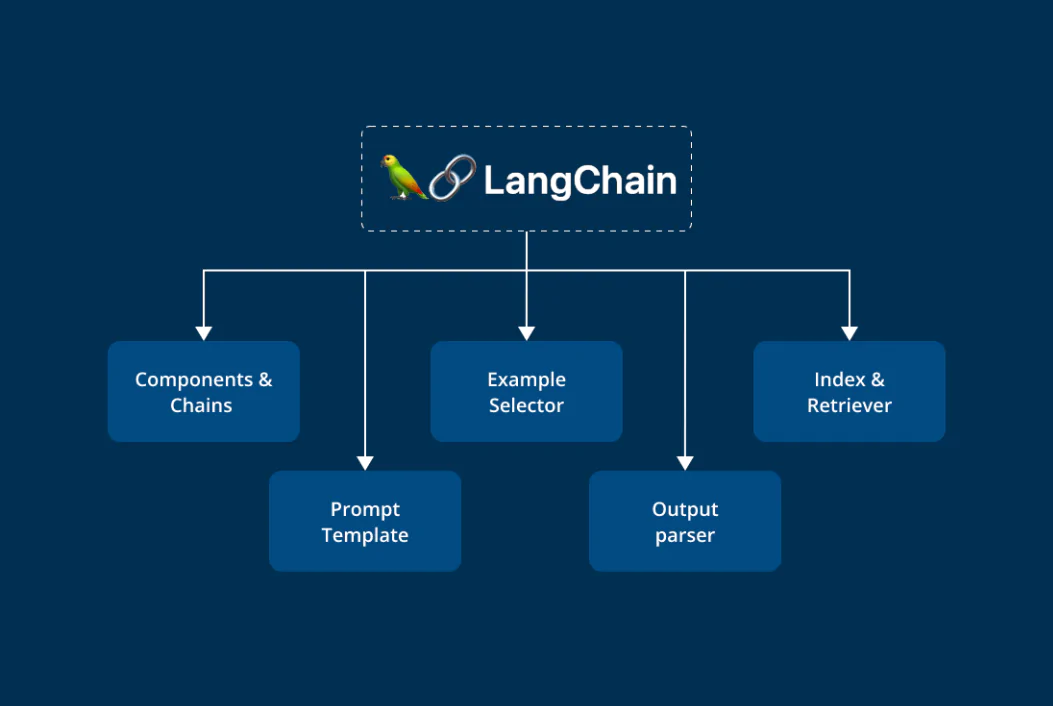

What are the fundamental components of LangChain?

LLMs

LangChain, of course, requires LLMs, or large language models, which are trained on enormous text and code datasets. Among other things, you can use them to create text, translate between languages, and respond to inquiries.

Prompt templates

Prompt templates format user input so that the language model can understand it. They can be used to explain the task that the language model is supposed to perform or to set the scene for the user’s input. For instance, a chatbot’s prompt template may contain the user’s name and query.
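The chatbot example can be sketched with Python's built-in string formatting. The `SimplePromptTemplate` class below is a hypothetical helper written for this illustration, not LangChain's own prompt template class, and the wording of the template is an assumption.

```python
from typing import List


class SimplePromptTemplate:
    """Hypothetical helper for illustration; not LangChain's own class."""

    def __init__(self, template: str, input_variables: List[str]) -> None:
        self.template = template
        self.input_variables = input_variables

    def format(self, **values: str) -> str:
        # Fail early if a required variable was not supplied.
        missing = [name for name in self.input_variables if name not in values]
        if missing:
            raise KeyError(f"Missing prompt variables: {missing}")
        return self.template.format(**values)


chatbot_prompt = SimplePromptTemplate(
    template=(
        "You are a helpful support assistant.\n"
        "The user's name is {name}.\n"
        "Answer this question: {query}"
    ),
    input_variables=["name", "query"],
)

print(chatbot_prompt.format(name="Ada", query="What is LangChain?"))
```

Keeping the task description in the template and the user's data in variables means the same instructions are reused for every request.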

Indexes

Indexes are databases that hold the documents an application makes available to the LLM. They can store the documents’ text, their relationships, and their metadata.

Retrievers

Algorithms known as retrievers search an index for particular information. They can be used to find documents most similar to a given file or documents pertinent to a user’s query. Retrievers are essential for improving the accuracy and speed of the LLM’s responses.
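As a rough sketch of what a retriever does, the example below ranks a small list of documents by word-overlap (cosine) similarity to a query. It assumes a simple bag-of-words model; production retrievers typically use learned embeddings and a vector store instead, and `tokenize`, `cosine`, `retrieve`, and `DOCUMENTS` are hypothetical names.

```python
import math
import re
from collections import Counter
from typing import List

# Hypothetical document index used only for this illustration.
DOCUMENTS: List[str] = [
    "LangChain connects language models to external data sources.",
    "Axolotls are salamanders that live in lakes.",
    "Prompt templates format user input for a language model.",
]


def tokenize(text: str) -> List[str]:
    # Lowercase the text and keep only alphanumeric words.
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two word-count vectors.
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    # Return the k documents most similar to the query.
    query_vec = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda doc: cosine(query_vec, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:k]


print(retrieve("Where do axolotls live?", DOCUMENTS, k=1))
```

Only the top-ranked documents are passed on to the LLM, which keeps prompts short and focused on relevant material.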

Output parsers

Output parsers are responsible for formatting the responses that the LLM produces. They can add more information, change the response’s structure, or remove any unwanted content. Output parsers are essential for making sure that the LLM’s responses are easy to understand and act on.
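A minimal sketch of an output parser, assuming the model was asked to reply with a JSON object that has an `answer` field and an optional `sources` field. The function name, the field names, and the sample reply are all hypothetical; this is not LangChain's parser API.

```python
import json
from typing import Any, Dict


def parse_json_answer(raw: str) -> Dict[str, Any]:
    """Extract the JSON object from a model reply and keep only known fields."""
    start = raw.find("{")
    end = raw.rfind("}")
    if start == -1 or end < start:
        raise ValueError("No JSON object found in model output")
    data = json.loads(raw[start : end + 1])
    # Restructure the response and drop any fields we did not ask for.
    return {
        "answer": str(data["answer"]).strip(),
        "sources": list(data.get("sources", [])),
    }


# Hypothetical raw model reply with chatter around the JSON payload.
raw_reply = (
    "Sure! Here is the result:\n"
    '{"answer": " Axolotls live in lakes. ", "sources": ["doc-2"], "debug": "x"}\n'
    "Hope that helps."
)

print(parse_json_answer(raw_reply))
```

The surrounding chatter and the unexpected `debug` field are removed, so downstream code can rely on a fixed structure.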

Benefits of using LangChain

Here are some benefits of LangChain:

Scalability: Applications that can process enormous amounts of data can be developed using LangChain.

Adaptability: The framework’s versatility enables the development of a broad range of applications, such as question-answering systems and chatbots.

Extensibility: The framework’s expandability allows developers to incorporate their own features and functionalities.

Simple to use: LangChain provides a high-level API for integrating language models with a range of data sources and creating intricate apps.

Open source: LangChain is a freely available framework that can be used and altered.

Vibrant community: You can get help and support from a sizable and vibrant community of LangChain developers and users.

Advanced Features of LangChain

Some of LangChain's more advanced features include the following:

- Personalized prompts that adapt to your needs.

- Chaining links together to build sophisticated applications.

- Customizing code to create distinctive applications.

- Model integration for data-augmented generation and for accessing language models such as text-davinci-003.

- Adaptable components that can be combined or separated to meet specific needs.

- Context manipulation: setting and steering context for better accuracy and user experience.

What kind of apps can you build with LangChain?

Creating and condensing content

LangChain is useful for building summarization systems that can generate summaries of blog posts, news articles, and other types of text. Content generators that produce interesting and useful writing are another common use case.

Chatbots

Naturally, chatbots and other question-answering systems are excellent use cases for LangChain. Chatbots are capable of answering questions, offering assistance to customers, and producing original text in the form of emails, letters, screenplays, poems, code, and more.

Data Analysis Tools

LangChain can also be used to build data analysis tools that help users understand the relationships between different pieces of data.

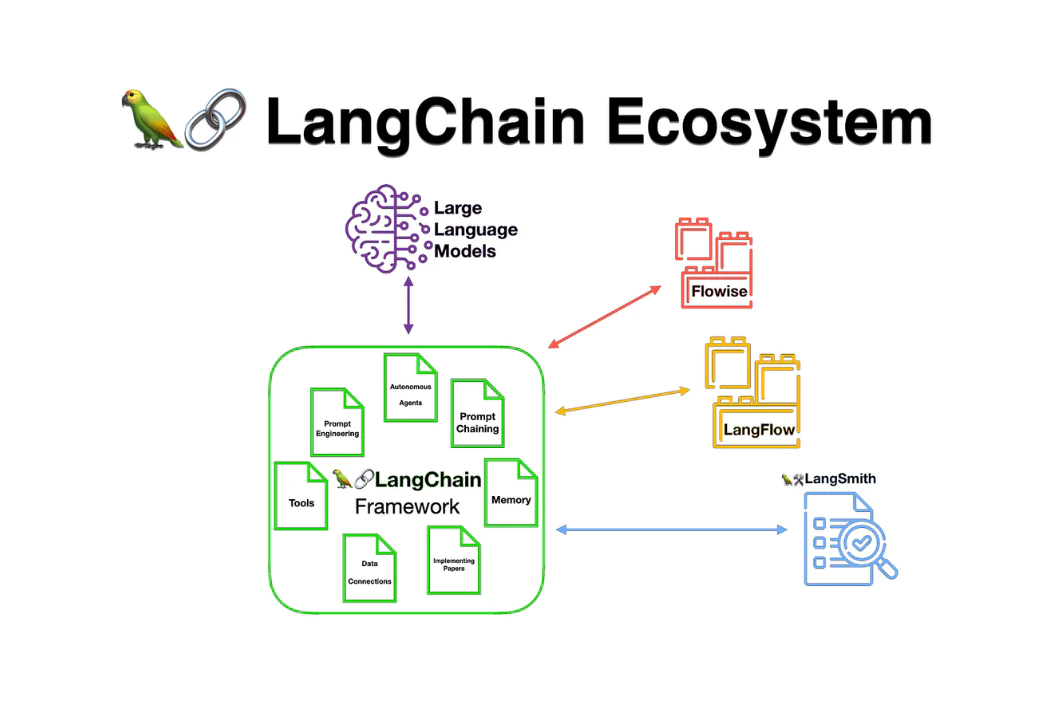

What are Integrations in LangChain?

LangChain’s integrations make it easier to work with a variety of platforms, data sources, and programming languages when building end-to-end chains.

This means you can develop language model applications in the environment of your choice.

A summary of the main integrations that LangChain offers is as follows:

- LLMs (large language models): Hugging Face, Anthropic, OpenAI, and others.

- Cloud platforms: Google Cloud, Amazon, Azure, and other well-known cloud service providers.

- Data sources: Wikipedia, Apify Actors, Notion, Google Drive, and more.

You can build more complex and adaptable language model-based applications by leveraging these integrations.

Is LangChain open-source?

Yes. LangChain is an open-source project and is free to use. The source code is available on GitHub, where you can use it to build your own applications.

Wrap up: the future of LangChain

Currently, the main use case for LangChain is chat-based apps built on top of LLMs (especially ChatGPT), also known as “chat interfaces.” The company’s CEO, Harrison Chase, stated in a recent interview that the ideal use case at the moment is “chat over your documents.” To enhance the conversation experience, LangChain also offers extra features like streaming, which delivers the LLM’s output token by token rather than all at once.
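Streaming can be illustrated with a Python generator that yields a reply piece by piece. This is a sketch under simplifying assumptions: `fake_streaming_llm` is a hypothetical stand-in that splits on spaces, whereas real models emit tokens defined by their own tokenizers.

```python
from typing import Iterator


def fake_streaming_llm(prompt: str) -> Iterator[str]:
    # Stand-in for a streaming model: yields the reply one word at a time.
    reply = f"You asked: {prompt}"
    for index, word in enumerate(reply.split(" ")):
        # Re-attach the separating space to every chunk except the first.
        yield word if index == 0 else " " + word


# A chat interface can display each chunk as soon as it arrives.
for chunk in fake_streaming_llm("what is LangChain?"):
    print(chunk, end="", flush=True)
print()
```

The user starts reading after the first chunk arrives instead of waiting for the full response, which makes a chat interface feel more responsive.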

Conclusion

LangChain provides a comprehensive approach to building applications driven by LLMs and generative models. Developers can combine components such as prompt templates and vector databases, together with fundamental ideas from data science, to create inventive solutions.

As the technology advances, agents are incorporating increasingly complex components, such as chat interfaces, and providing more thorough support across a range of use cases.

FAQ

What is the purpose of LangChain?

LangChain is an open-source framework for building applications based on large language models (LLMs). LLMs are large deep learning models that are pre-trained on vast amounts of data and can respond to user input, for example by answering questions or generating text from a prompt.

Is LangChain used by ChatGPT?

Developers can use LangChain to build ChatGPT-like features, such as chatbots and Q&A systems, instead of relying on an unofficial API.

What makes LangChain such a hit?

LangChain makes it easier to organize large amounts of data so that LLMs can access it quickly, and it enables LLMs to generate responses based on the most recent data available online. This is how developers can create dynamic, data-responsive applications with LangChain.
